Compare GPU Performance on AI Workloads

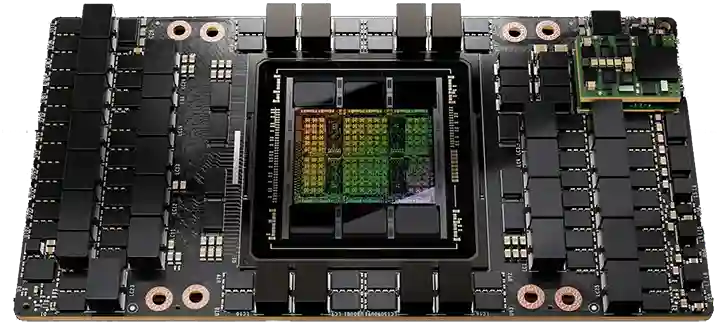

A40

vs.

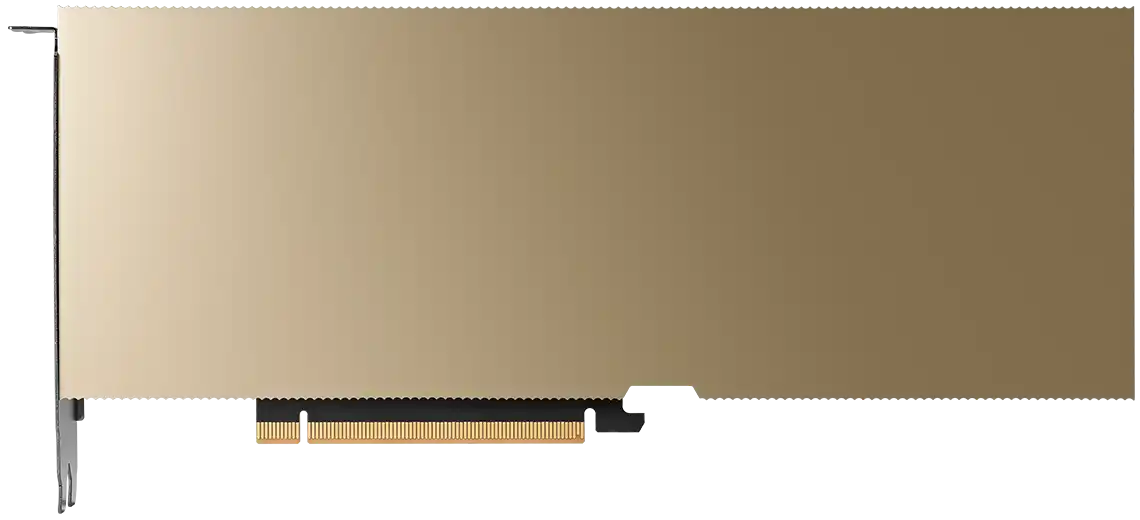

H100 PCIe

LLM Benchmarks

Benchmarks were run on RunPod GPUs using vLLM. For more details on vLLM, see the vLLM GitHub repository.

Chart: Output Throughput (tok/s) for meta-llama/Llama-3.1-8B-Instruct, 128 input tokens, 128 output tokens.
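For readers unfamiliar with the metric, output throughput in tok/s is the number of generated tokens divided by the wall-clock time taken to generate them. The sketch below shows only that arithmetic. The function name `output_throughput`, the request count, and the elapsed time are hypothetical values chosen for illustration, not RunPod's benchmark harness or measured results.

```python
def output_throughput(output_tokens: int, elapsed_seconds: float) -> float:
    """Return generated tokens per second for one benchmark run."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return output_tokens / elapsed_seconds


# Hypothetical run: 1 request producing 128 output tokens.
num_requests = 1      # assumed request count, for illustration only
output_len = 128      # matches the "128 output" setting on this page
elapsed = 2.0         # made-up wall-clock seconds, NOT a measured result

total_output_tokens = num_requests * output_len
print(output_throughput(total_output_tokens, elapsed))  # 64.0
```

Only output tokens are counted here. Input (prompt) tokens are excluded, which is why the same workload can report different numbers for output throughput and total token throughput.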

Get started with RunPod today.

We handle millions of GPU requests a day. Scale your machine learning workloads while keeping costs low with RunPod.
